We’re thrilled to announce that Azira has been named the “Data Intelligence Platform of the Year” in the prestigious 5th annual Data Breakthrough Awards program. The Data Breakthrough Awards are highly esteemed in the data technology industry, recognizing innovators and leaders from around the world in categories including data analytics, management, and infrastructure. This […]

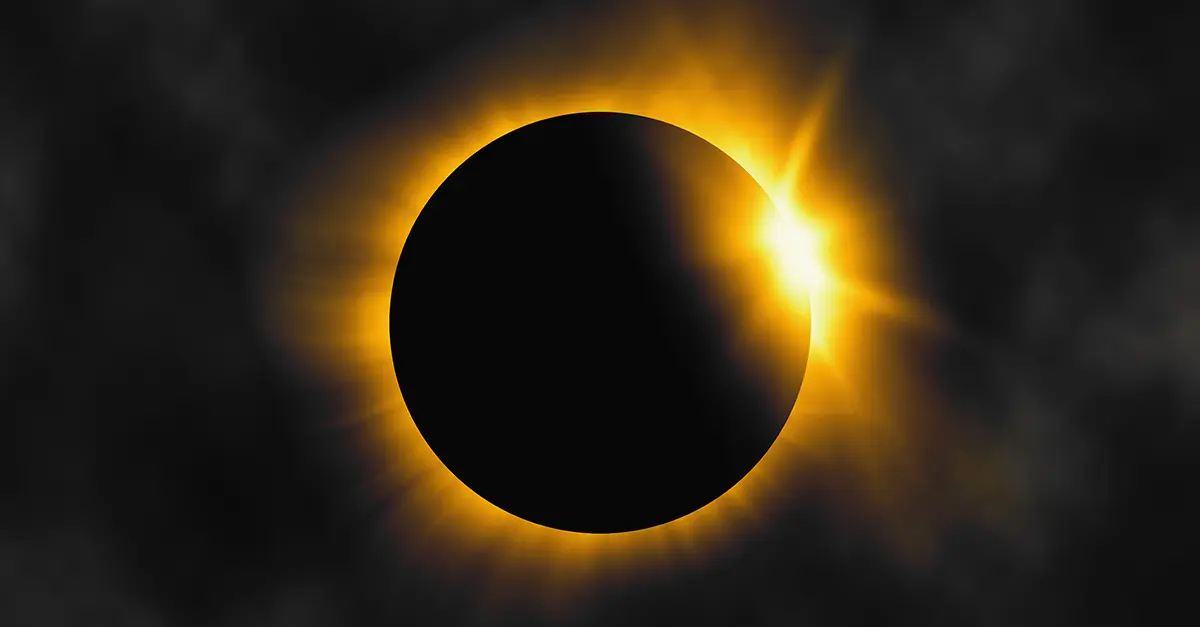

Turns out the Big Event of 2024 didn’t even happen on our own lonely planet. We’re talking, of course, about the Total Solar Eclipse of April 8. If you are…

At the beginning of this month, we introduced our new company: Azira. This transformation marked a significant milestone in our journey, and I am honored to share this momentous news…

Taylor Swift’s highly anticipated Eras Tour recently swept through Australia with a series of mesmerizing performances, captivating audiences across Sydney and Melbourne. As Swifties flocked to these two venues, Azira’s…